Are leaders in danger of losing their jobs to automation? Don’t look now, but robots are rapidly learning how to become better bosses.

By Peter D. Harms, Ph.D.

The videogame 7 Billion Humans begins with the premise that all jobs will someday be performed by robots. The result is a dystopia where the bots provide unlimited energy, transportation, and food, but the humans are deprived of purpose and begin demanding “good-paying jobs.”

The game is supposed to be funny, but no one’s laughing about this increasingly plausible future. McKinsey & Company has estimated that by 2030, approximately 20 to 30 percent of all jobs will be impacted or eliminated by automation. That starts with simple tasks—picture robots hanging drywall—and will soon evolve to more complex jobs.

Companies like Amazon, for example, now have tens of thousands of robots working in warehouses sorting and selecting products for shipment, displacing thousands of warehouse workers. Then there’s the potential loss of more than 3 million transportation jobs that could disappear when fully automated vehicles are deemed safe enough for widespread use.

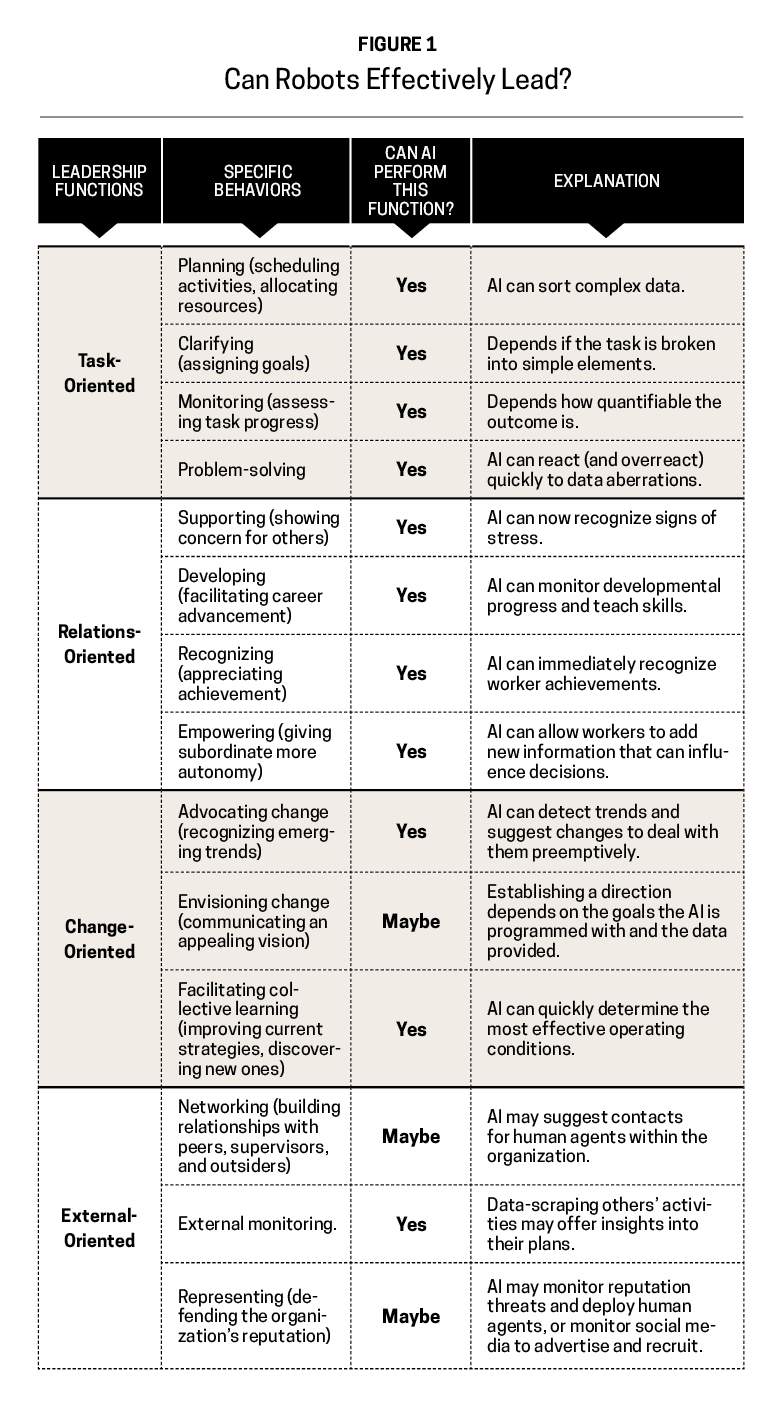

So where does that leave the leaders? Top bosses who rely heavily on their creative and social (read: human) skills rarely seem to worry about losing their jobs to robots. But make no mistake: Artificial intelligence (AI) is constantly evolving. Just look at the framework of leadership developed by University at Albany psychologist Gary Yukl in Figure 1 and you’ll see that AI can perform nearly all the basic functions of leaders, with a few caveats and exceptions.

How Robots Are Becoming Leaders

Robots should prove to be particularly adept at handling task-oriented functions related to monitoring performance, managing supply chains, and deploying resources where needed. Much of this harkens back to Frederick Taylor’s scientific management approach, in which work is broken into simple tasks that are easily assessed and monitored.

One function, handling a crisis, still presents problems because such instances can rarely be programmed and predicted. Consequently, there is always a chance that AI-driven decisions may overreact before human agents can stop them. We see instances of this in stock market flash-crashes where multiple AI trading platforms digest bad news or negative signals and make the situation worse by dumping stocks all at once.

You might be surprised that robots can also perform relationship-oriented functions. But AI has been able to read human emotional expressions for over a decade, and scientists working with NASA are actively exploring many passive techniques for assessing worker well-being, like wearable devices.

AI-driven devices can do more than just monitor humans’ well-being. Scientists designed Kirobo, a robot sent to the International Space Station, to interact with astronauts in order to reduce loneliness. In terms of developing followers, an AI-driven robot named Bina48 has successfully taught ethics and military theory courses at West Point. It’s reasonable to suggest that monitoring worker skills and achievements to guide such training modules shouldn’t be difficult.

But empowering workers seems like more of a stretch for AI. A well-designed algorithm is intended to replace humans’ error-prone and biased decisions, not to defer to them. Still, scientists could design a learning algorithm that takes input from human operators, who may notice aspects of the environment that are important to success.

Change-oriented leadership may also prove more difficult for AI. Robots can detect patterns that humans overlook, but they’re often programmed with a singular goal in mind. They maximize their output using the information they’re given. For now, creating new innovations remains a competitive advantage for humans.

John List, Lyft’s chief science officer, argues that local regional managers are necessary to supplement the algorithms that organize his company because they have a better sense of local conditions, culture, and the nature of the workers. The managers can deploy resources in novel ways to experiment with improving recruitment and retention, and the bots can then monitor such experiments and determine their effectiveness.

While robots haven’t yet mastered how to communicate an inspiring vision to motivate workers, they’re getting closer. Leadership scholars have extensively studied how language is used to create perceptions of charisma and feelings of collective will.

Paired with sophisticated avatar technology, scientists believe realistic proxies for human leaders can be developed. It’s even possible that a “leader” can eventually be designed for a particular follower based on their own unique characteristics and preferences. But for now, many robots are already able to deploy persuasive or emotion-laden messages to gig economy workers to convince them to work longer or harder.

Last, developing interpersonal networks is still solely our domain. But robots can nonetheless monitor social network traffic and news, view internal and external communications, and suggest places or audiences where human agents acting on behalf of the company might be most meaningfully deployed.

What Happens to the Humans?

As many of these examples suggest, AI will probably function best when it’s used to supplement leader functions. That means absorbing, integrating, and making suggestions for deploying human workers where they will be most effective.

And we can’t just be the eyes and ears of robots—we must also be their conscience. Algorithms will only do what they’re told and only use the data provided. Consider recent stories about Amazon and other companies trying to utilize AI for HR functions: The algorithms see trends, like a historical lack of women or minorities in a particular type of job, and come to the conclusion that such individuals are therefore undesirable in those job roles and begin screening them out. A human would (hopefully) know better than to arrive at that conclusion.

Utilizing AI for managerial and leadership functions presents some amazing opportunities in the future, but some major downsides, too. Although the incentives seem to suggest that we plunge forward, we’re right to proceed with caution. Organizations need to recognize that algorithms will optimize whatever function they’re given.

For many years, Uber and Lyft drivers were all but forced to take undesirable jobs, lest they be kicked off the platforms. Research showed these drivers reported high levels of stress and dissatisfaction with the algorithms and, as a result, the companies experienced extremely high levels of turnover. Although both organizations have since ended this policy, it shows what an algorithm will do if it’s told to maximize efficiency from a revenue perspective without also being told to consider the potential turnover costs.

Similarly, the stories you hear about Amazon warehouse workers peeing in bottles and delivery drivers racing to dump packages so they don’t miss performance targets illustrate how robots programmed for efficiency can undermine human dignity, lead to unhealthy levels of stress, and have dangerous or fatal consequences. If the organization finds values like safety, well-being, customer satisfaction, diversity, and ethics important, then it must incorporate them into the algorithms making the decisions.

When Elon Musk was faced with production delays at Tesla, a company designed to maximally exploit automation and AI, the CEO called humans underrated. Maybe this foreshadows a future where humans and robots can successfully find a way to work together.

Then there’s 7 Billion Humans, which (spoiler alert) ends with the robots forcing the humans to think more analytically and creatively and realize their hidden abilities. We can only hope that in the future age of algorithmic leadership, AI is used to optimize well-being and maximize human potential.

Peter D. Harms, Ph.D., is an associate professor of management at the University of Alabama. His research focuses on the assessment and development of personality, leadership, and psychological well-being. In addition, he is currently engaged in research partnerships with the U.S. Army and NASA.